video edition

-

This is a weird ctypes thing that is less documented than it should be...

If there is a symbol called someSymbol exported in a dll, you can use

    somectypestype.in_dll(dll, symbolname)

and ctypes will cast the address of the symbol to the type you tell it. In Pythonista, objc_util.c is the only dll we need or can use. Often in objc you would use c_void_p.in_dll to access, for example, a const NSString *, then use ObjCInstance to convert it to an NSString. In this case I already had the structure defined. Not all constants are actually exported as symbols; some are just #defines.
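A minimal sketch of the in_dll pattern that also runs on desktop CPython, so it can be tried outside Pythonista. The helper name load_constant is made up for illustration, and the demo reads the interpreter's own exported PyLong_Type symbol through ctypes.pythonapi only because objc_util.c is not available there. The ValueError branch covers constants that are not exported at all, such as #defines.

```python
import ctypes


# Same field layout as the CMTime structure used in this thread.
class CMTime(ctypes.Structure):
    _fields_ = [('value', ctypes.c_int64),
                ('timescale', ctypes.c_int32),
                ('flags', ctypes.c_uint32),
                ('epoch', ctypes.c_int64)]


def load_constant(dll, symbol, ctype):
    """Overlay ctype on an exported data symbol (hypothetical helper).

    Returns None when the dll does not export the symbol, which is the
    case for constants that are only #defines.
    """
    try:
        return ctype.in_dll(dll, symbol)
    except ValueError:  # ctypes raises ValueError when the symbol is not found
        return None


# Desktop demo: PyLong_Type is a data symbol exported by CPython itself.
found = load_constant(ctypes.pythonapi, 'PyLong_Type', ctypes.c_char)
missing = load_constant(ctypes.pythonapi, 'no_such_symbol_hopefully', ctypes.c_int)
print(found is not None, missing is None)

# In Pythonista the same helper would be used like this (not run here):
#     from objc_util import c
#     kCMTimeZero = load_constant(c, 'kCMTimeZero', CMTime)
```

If load_constant returns None for a constant you expected, check the framework header: the value is probably a macro and has to be rebuilt by hand on the Python side.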

Btw, you can use mp4s or whatever. Instead of the hardcoded url, use

    asset = photos.pick_asset(assets)
    phasset = ObjCInstance(asset)
    asseturl = ObjCClass('AVURLAsset').alloc().initWithURL_options_(phasset.ALAssetURL(), None)

If I may ask, what is your end goal? Depending on what sort of processing you are doing, there are different writing options

-

@JonB thanks for the detailed explanation

My goal is not very well defined. I kind of dream about using Pythonista to analyse videos to do superresolution, object tracking, dynamic 3d modeling, etc... Still a long way to go. I just think the iPad is an extraordinary tool if you can bend it to your will. Pythonista seems to enable that with very low coding skill requirements (I mean: ones that match mine, and with the help of super-pro coders like you). I find that awesome and I am trying to see how far it can go.

Here is the latest version of my code:

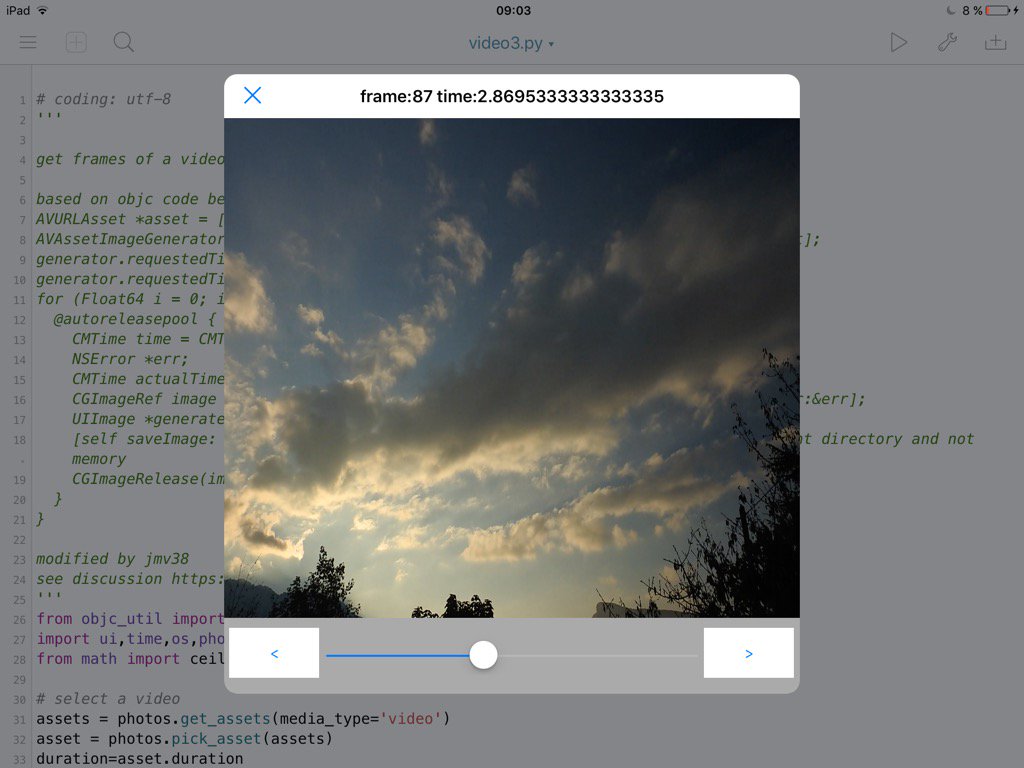

    # coding: utf-8
    '''
    get frames of a video, and show them in an imageview.
    based on objc code below

    AVURLAsset *asset = [[AVURLAsset alloc] initWithURL:url options:nil];
    AVAssetImageGenerator *generator = [[AVAssetImageGenerator alloc] initWithAsset:asset];
    generator.requestedTimeToleranceAfter = kCMTimeZero;
    generator.requestedTimeToleranceBefore = kCMTimeZero;
    for (Float64 i = 0; i < CMTimeGetSeconds(asset.duration) * FPS ; i++){
      @autoreleasepool {
        CMTime time = CMTimeMake(i, FPS);
        NSError *err;
        CMTime actualTime;
        CGImageRef image = [generator copyCGImageAtTime:time actualTime:&actualTime error:&err];
        UIImage *generatedImage = [[UIImage alloc] initWithCGImage:image];
        [self saveImage: generatedImage atTime:actualTime]; // Saves the image on document directory and not memory
        CGImageRelease(image);
      }
    }

    modified by jmv38
    see discussion https://forum.omz-software.com/topic/3621/video-edition/1
    '''
    from objc_util import *
    import ui,time,os,photos,gc
    from math import ceil

    # select a video
    assets = photos.get_assets(media_type='video')
    asset = photos.pick_asset(assets)
    duration=asset.duration

    # init frame picker
    phasset=ObjCInstance(asset)
    asseturl=ObjCClass('AVURLAsset').alloc().initWithURL_options_(phasset.ALAssetURL(),None)
    generator=ObjCClass('AVAssetImageGenerator').alloc().initWithAsset_(asseturl)

    from ctypes import c_int32,c_uint32, c_int64,byref,POINTER,c_void_p,pointer,addressof, c_double

    CMTimeValue=c_int64
    CMTimeScale=c_int32
    CMTimeFlags=c_uint32
    CMTimeEpoch=c_int64

    class CMTime(Structure):
        _fields_=[('value',CMTimeValue),
                  ('timescale',CMTimeScale),
                  ('flags',CMTimeFlags),
                  ('epoch',CMTimeEpoch)]
        def __init__(self,value=0,timescale=1,flags=0,epoch=0):
            self.value=value
            self.timescale=timescale
            self.flags=flags
            self.epoch=epoch

    c.CMTimeGetSeconds.argtypes=[CMTime]
    c.CMTimeGetSeconds.restype=c_double

    kCMTimeZero = CMTime.in_dll(c,'kCMTimeZero')
    generator.setRequestedTimeToleranceAfter_(kCMTimeZero, restype=None, argtypes=[CMTime])
    generator.setRequestedTimeToleranceBefore_(kCMTimeZero, restype=None, argtypes=[CMTime])

    lastimage=None # in case we need to be careful with references
    fps = 30
    tactual=CMTime(0,fps) # return value
    currentFrame = 0
    maxFrame = ceil(duration*fps)

    def getFrame(i):
        t=CMTime(i,fps)
        cgimage_obj=generator.copyCGImageAtTime_actualTime_error_(t,byref(tactual),None,restype=c_void_p,argtypes=[CMTime,POINTER(CMTime),POINTER(c_void_p)])
        image_obj=ObjCClass('UIImage').imageWithCGImage_(cgimage_obj)
        ObjCInstance(iv).setImage_(image_obj)
        global lastimage
        lastimage=image_obj # make sure this doesn't get gc'd
        gc.collect() # to avoid Pythonista random exit sometimes
        root.name=' frame:'+str(i)+' time:' +str(tactual.value/tactual.timescale)

    # set up a view to display
    root=ui.View(frame=(0,0,576,576))
    iv=ui.ImageView(frame=(0,0,576,500))
    root.add_subview(iv)

    # a slider for coarse selection
    sld = ui.Slider(frame=(100,500,376,76))
    sld.continuous = False
    root.add_subview(sld)

    def sldAction(self):
        global currentFrame
        currentFrame = int(duration*self.value*fps)
        getFrame(currentFrame)
    sld.action = sldAction

    # buttons for frame by frame
    prev = ui.Button(frame=(5,510,90,50))
    prev.background_color = 'white'
    prev.title = '<'
    def prevAction(sender):
        global currentFrame
        currentFrame -= 1
        if currentFrame < 0:
            currentFrame = 0
        sld.value = currentFrame / fps / duration
        getFrame(currentFrame)
    prev.action = prevAction
    root.add_subview(prev)

    nxt = ui.Button(frame=(480,510,90,50))
    nxt.background_color = 'white'
    nxt.title = '>'
    def nxtAction(sender):
        global currentFrame
        currentFrame += 1
        if currentFrame > maxFrame:
            currentFrame = maxFrame
        sld.value = currentFrame / fps / duration
        getFrame(currentFrame)
    nxt.action = nxtAction
    root.add_subview(nxt)

    root.present('sheet')
    getFrame(currentFrame)

-

oh, sorry, i misread your question:

Depending on what sort of processing you are doing, there are different writing options

i intend to do numpy processing with float or complex values, and when the processing is done, convert back to 24-bit RGB (or whatever format is required to get a video)

-

the above code gives this screenshot:

-

Hello @JonB

If I may ask, this message is just to know if you intend to look at the video writing problem? (I don't mean to harass you on that subject, you've already been a terrific help, thank you so much. This is just to know if you are working on it or not, so I can adjust my own coding plans to what you plan to share.) Thanks!

-

@jmv38 I think what you probably are going to want is an AVVideoComposition, which lets you use standard or custom CIFilters to process a movie.

I am playing around with getting AVAssetReader working, though I might not get a lot of time to work on this for a few weeks

-

Thanks for your answer @JonB

I had a look at apple's doc on videocomposition. It is way over my head, so I'll postpone this part of my project to an indefinite date. And for now I'll focus on the many other parts that still need to be written, and are more within my reach...

Thanks!

-

btw, in the meantime, you should be able to access imageview.image to get a ui.Image, which you can then convert to jpg or png to write to a sequence of files, or to a PIL image for processing, etc.
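A rough sketch of the "sequence of files" part, assuming the image bytes come from ui.Image.to_png() in Pythonista. The helper names frame_path and save_frame and the frames directory are made up for illustration; the demo writes placeholder bytes so it runs anywhere.

```python
import os
import tempfile


def frame_path(directory, index, ext='png', digits=5):
    """Build a zero-padded file name so the frames sort in order."""
    return os.path.join(directory, 'frame_%0*d.%s' % (digits, index, ext))


def save_frame(data, directory, index):
    """Write one frame's encoded bytes to disk and return the path."""
    os.makedirs(directory, exist_ok=True)
    path = frame_path(directory, index)
    with open(path, 'wb') as f:
        f.write(data)
    return path


# Stand-in demo with placeholder bytes instead of a real PNG.
demo_dir = tempfile.mkdtemp()
demo_path = save_frame(b'not a real png', demo_dir, 7)
print(os.path.basename(demo_path))

# In Pythonista (assumption: iv is the ui.ImageView from the script above):
#     save_frame(iv.image.to_png(), 'frames', currentFrame)
```

Zero-padded names keep the files in frame order when listed, which helps if they are later fed to a video writer.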

-

Correct. This is what i really needed in the first place. The video writing capability is just the 'cherry on the cake'.

-

i found this link http://stackoverflow.com/questions/5640657/avfoundation-assetwriter-generate-movie-with-images-and-audio

The very first block of code in that topic is sufficient, I think (I don't want to alter the audio).

Is it possible to embed the whole code and just pass it the video and the image? Or do I have to call each line with objc_util?

-


@SandraGarrett more info on the .in_dll() method here

-


I'd like to be able to get a video from the photos module or a local .mov file and show a given frame in the console.

There seem to be two ways to do this, but neither is working for me yet.

I tried running this:

https://github.com/jsbain/objc_hacks/blob/master/getframes.py

But the resulting view is just a transparent overlay.

It seems to me that this returns a 'NoneType' object:

    cgimage_obj=generator.copyCGImageAtTime_actualTime_error_(t,byref(tactual),None,restype=c_void_p,argtypes=[CMTime,POINTER(CMTime),POINTER(c_void_p)])
    type(cgimage_obj)
    <class 'NoneType'>

It also looks like the photos module has a .seek function, but I am unable to get that to show me anything other than frame 1 using get_image().

Are there any working examples of this?

Thanks so much for your help.

-

Did you try the modified version of the code posted by @jmv38 at the top of this thread?

The next thing to do would be to step through each line and see if you are getting objects back. For instance, is generator valid, etc. I'll have to check, but some of these methods may be deprecated now.
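One way to do that stepping-through, as a sketch: wrap each intermediate result in a small check so the first step that returns None is reported by name. The check helper is hypothetical, and the demo uses stand-in values so it runs outside Pythonista. The commented lines show how it might be applied to the script in this thread, including passing a real error pointer instead of None, which is an assumption about what would help here rather than a confirmed fix.

```python
def check(name, value):
    """Return value unchanged, but fail loudly at the first step that gave None."""
    if value is None:
        raise RuntimeError('%s is None; stop here and inspect this step' % name)
    print('%s -> %s' % (name, type(value).__name__))
    return value


# Stand-in demo: the third step pretends to fail like copyCGImageAtTime did.
steps = [('asset', object()), ('generator', object()), ('cgimage_obj', None)]
failed_at = None
for name, value in steps:
    try:
        check(name, value)
    except RuntimeError:
        failed_at = name
        break
print('first failing step:', failed_at)

# In Pythonista (assumption: same names as the script in this thread):
#     phasset = check('phasset', ObjCInstance(asset))
#     url = check('ALAssetURL', phasset.ALAssetURL())
#     generator = check('generator', generator)
#     err = c_void_p()  # pass byref(err) instead of None to capture the NSError
#     cgimage_obj = generator.copyCGImageAtTime_actualTime_error_(
#         t, byref(tactual), byref(err), restype=c_void_p,
#         argtypes=[CMTime, POINTER(CMTime), POINTER(c_void_p)])
#     if err.value:
#         print(ObjCInstance(err))  # prints the NSError description
```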

-

Hi. Thanks for the reply.

Yes, I did. It has a similar result: a transparent view, and I'm not able to see any images. I've started going through your code line by line, but I've only just started using objc_util and there are other things you are doing that are new to me.

My concern is that it isn’t possible anymore and I’m going down the wrong path.

-

@Pythonised In iOS 16/iPadOS 16, you will have image(at:) to replace the deprecated copyCGImageAtTime_actualTime_error_

-
